Geeky Gadgets

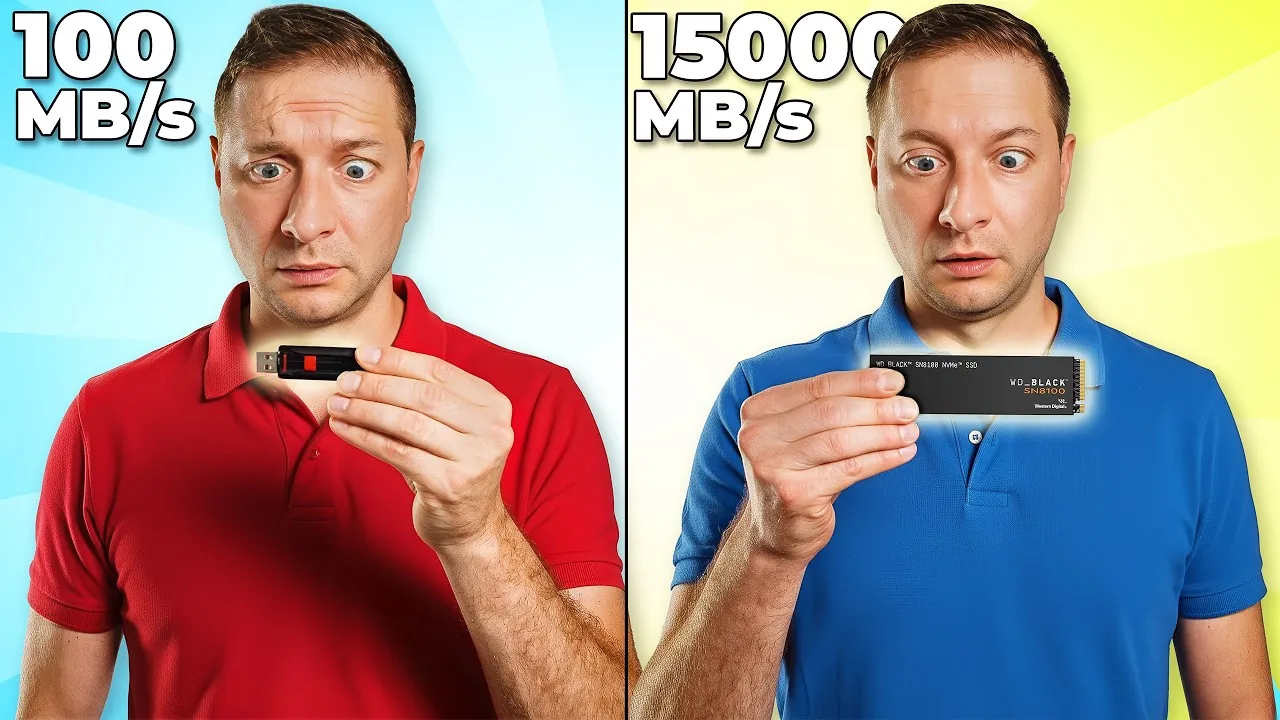

Imagine waiting nearly four minutes for a file to load, only to realize that a simple hardware upgrade could have cut that time to under nine seconds. When you work with large language models (LLMs), the choice of storage can feel like the difference between crawling through quicksand and sprinting on a track. These models often come as files as large as 18 GB and demand storage that can keep up with their scale. Yet not all storage is created equal. From sluggish USB thumb drives to high-end SSDs with speeds exceeding 14,000 MB/s, the disparity in performance is staggering, and so are the consequences for your productivity. Could your storage setup be holding your LLM workflows hostage?

Below, Alex Ziskind explores the dramatic impact of storage speed on LLM performance, breaking down how different devices, from basic USB drives to ultra-fast internal SSDs, perform when loading very large model files. You’ll see not only the stark contrasts in loading times but also the trade-offs between speed, capacity, and cost. Whether you’re a data scientist, developer, or tech enthusiast, this comparison will help you make informed decisions about optimizing your storage for smoother, more efficient operations. After all, when seconds add up to hours, choosing the right storage isn’t just a technical decision, it’s one that directly shapes your workflow.

A range of storage devices was tested to evaluate their impact on LLM performance. They varied widely in speed and technology, from basic USB thumb drives to ultra-fast internal SSDs, showing how each type affects the loading of large files.

The results revealed a stark contrast in performance. Slower devices caused significant delays, while faster options enabled near-instantaneous loading of the 18 GB LLM file. This demonstrates the critical role of storage speed in maintaining efficient workflows.
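To get a feel for this kind of comparison on your own hardware, the following is a minimal sketch of a sequential-read timing test. It is not the method used in the video: the function name `measure_read_throughput`, the 8 MB chunk size, and the small temporary demo file are all assumptions made for illustration. Point it at a model file on each drive you want to compare.

```python
import os
import tempfile
import time


def measure_read_throughput(path, chunk_size=8 * 1024 * 1024):
    """Read a file start to finish and return (bytes_read, seconds, MB/s).

    Hypothetical helper for illustration. MB/s uses decimal megabytes
    (1 MB = 1,000,000 bytes), matching how drive speeds are usually quoted.
    """
    total = 0
    start = time.perf_counter()
    # buffering=0 skips Python's own buffer; the OS page cache still applies.
    with open(path, "rb", buffering=0) as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total += len(chunk)
    elapsed = time.perf_counter() - start
    mb_per_s = (total / 1e6) / elapsed if elapsed > 0 else float("inf")
    return total, elapsed, mb_per_s


if __name__ == "__main__":
    # Demo on a small temporary file; replace with a real model file path.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(4 * 1024 * 1024))
        demo_path = tmp.name
    try:
        size, seconds, rate = measure_read_throughput(demo_path)
        print(f"read {size} bytes in {seconds:.4f} s ({rate:.0f} MB/s)")
    finally:
        os.remove(demo_path)
```

A file that was read or written recently may be served from the operating system's cache rather than the drive, which inflates the result. For a fair comparison, use a file larger than available RAM or test after a fresh boot.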

The tests provided clear evidence of how storage speed affects LLM performance. For the 18 GB file, loading times ranged from nearly four minutes on slow storage to under nine seconds on fast storage.

These findings highlight the importance of investing in high-speed storage solutions, particularly when working with large datasets. Faster storage not only reduces delays but also enhances overall productivity by allowing quicker access to critical data.
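The relationship between file size, throughput, and loading time is simple arithmetic, and a rough sketch makes the figures in this article easier to compare. The helper names below are hypothetical, and the calculation assumes decimal units (1 GB = 1,000 MB) and a purely sequential read with no other overhead, which real model loading will not fully match.

```python
def estimate_load_seconds(file_size_gb, throughput_mb_s):
    """Idealized load time: size divided by sustained throughput."""
    return file_size_gb * 1000 / throughput_mb_s


def implied_throughput_mb_s(file_size_gb, seconds):
    """Effective throughput implied by an observed load time."""
    return file_size_gb * 1000 / seconds


if __name__ == "__main__":
    size_gb = 18  # model file size quoted in the article

    # Best case for a drive rated at 14,000 MB/s: about 1.3 seconds.
    print(f"{estimate_load_seconds(size_gb, 14_000):.1f} s at 14,000 MB/s")

    # Four minutes (240 s) corresponds to roughly 75 MB/s effective speed.
    print(f"{implied_throughput_mb_s(size_gb, 240):.0f} MB/s for a 240 s load")

    # Nine seconds corresponds to roughly 2,000 MB/s effective speed.
    print(f"{implied_throughput_mb_s(size_gb, 9):.0f} MB/s for a 9 s load")
```

Under these assumptions, a load of just under nine seconds implies an effective rate a little above 2,000 MB/s, well short of a 14,000 MB/s rating. That gap suggests a drive's peak specification is an upper bound rather than a prediction of real loading time.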

While storage speed is a key determinant of LLM performance, other technical factors also play a significant role. Optimizing these elements can further enhance your workflow:

By addressing these considerations, you can create a more balanced and efficient system for handling LLM workloads.

To maximize the efficiency of your LLM workflows, consider implementing the following strategies:

By following these recommendations, you can streamline your workflows and ensure that your system is fully optimized for handling large language models.

The analysis underscores the critical role of storage speed in determining the efficiency of LLM workflows. Slow storage devices can introduce significant delays, hindering productivity and increasing frustration. High-speed solutions such as internal SSDs and direct-attached storage (DAS), on the other hand, load models quickly, reducing bottlenecks and improving overall performance. By carefully selecting and optimizing your storage configuration, you can get the most out of your LLM applications and achieve smoother, more efficient operations.

Media Credit: Alex Ziskind
